What Is Tokenization?

Tokenization is the process of replacing sensitive data, such as a credit card number or other payment data, with a token. A token is a string of randomized data with no meaning or value of its own. Unlike encrypted data, tokenized data is undecipherable and, most of the time, irreversible, since there is no relationship between the token and the original number. Because the token acts simply as an identifier for the valuable information it stands in for, that information is effectively placed out of an attacker's reach.

This allows organizations to keep using pertinent information for uninterrupted business operations while storing the sensitive originals outside their internal systems. The data that remains inside the organization is effectively devalued and becomes unusable in the event of a data breach.

There are several technologies available to tokenize data so that hackers get nothing of value. Here we take a deeper dive into tokenization and when to use it, how it works, the types of data it can protect, the types of tokenization available and how tokenization can work for online data.

The origins of tokenization

TrustCommerce was the first company to formulate the concept of tokenization in 2001. When their client needed a way to reduce the risks of storing cardholder data, they created something called TC Citadel — the first iteration of what we now know as data tokenization. This first iteration allowed for safe and secure payment transactions without the need to store cardholder data on their servers.

The solution proved to be extremely secure, since it ensured hackers would only receive indecipherable strings instead of valuable payment information. Major credit and debit card companies have since adopted this method, which has become an industry norm in the Ecommerce landscape.

Why Is Tokenization Used?

Introduced in 2001, tokenization continues to be one of the best methods to outsmart hackers and protect sensitive information stored online. In fact, the global tokenization market is estimated to increase at a compound annual growth rate (CAGR) of 21.5% over the next five years.

This increase in demand shows just how important data security has become, especially in terms of customer trust and loyalty. The rise in data breaches exposing unsecured or “clear-text” Personally Identifiable Information (PII), Protected Health Information (PHI) and financial information has raised serious questions about how consumer data is handled. Breaches bring liability, legal action and millions in fines, while eroding consumer trust and damaging the corporate brand.

With 63% of consumers indicating that an organization’s data collection and storage practices are the most important factor when they share sensitive information with the organization, installing defensive data breach measures, like tokenization, is critical.

What Is Tokenization?

As noted above, tokenization is the process of removing sensitive information, such as social security numbers and credit card and payment information, from an organization’s internal systems, where it is vulnerable to hackers, and replacing it with a one-of-a-kind token that is unreadable even if hackers manage to breach those systems. A token is a random sequence of numbers or letters that the organization’s internal systems can use at little risk while the original data is held securely in a token vault.

Rather than becoming outdated, tokenization has only become more critical in the current security landscape. In today’s IoT world, we’re creating a staggering 2.5 quintillion bytes of data daily through social media, healthcare, eCommerce and more, and more data means more need for protection. Along with the increase in data, the continued surge in data-related cybercrime has also created a high demand for tokenized data security and cloud-based solutions.

With 80% of consumers saying they would avoid purchasing from an organization if their data has been compromised in a security breach, tokenization could save you millions of dollars, not just from a data breach, but also from the loss of customer trust and loyalty.

What Is Detokenization?

Detokenization is the process of exchanging a token for the original data. Tokenization is often a permanent process, but if detokenization is required, it can only be done by the original tokenization system. It’s impossible to obtain the original number from just the token.

PCI DSS Compliance

One of the easiest ways for businesses to comply with standards set by the Payment Card Industry Security Standards Council (PCI SSC) is with tokenization. The PCI DSS sets security requirements for businesses that handle payment card data to ensure compliance with strict cybersecurity standards and ensure proper data protection from third parties.

When it comes to protecting payment data, businesses may use a form of tokenization called network tokenization. This is a type of payment tokenization offered by major payment networks (Visa, MasterCard, Discover and more) that replaces primary account numbers (PANs) and other card details with a token issued by the card brand.

While PCI DSS allows payment card data to be secured with encryption, merchants may find tokenization easier to implement when protecting data and meeting compliance standards. Because the systems that store and maintain payment data are often complex, performance-sensitive and ever-changing, tokenization is often much simpler to add than encryption.

What does tokenization protect?

Historically, tokenization has been deployed to protect credit card and debit card data in storage. Storing this data is essential for recurring and subscription transactions so that the customer can be billed on a regular basis without having to re-enter their information. Storing clear-text credit and debit card data in any form violates PCI DSS compliance rules, as well as data privacy rules.

In recent years, tokenization has expanded to also “mask” PII, PHI and banking information, especially ACH account details. Examples of the type of data a tokenization system can secure include:

- A real name or alias, signature, or physical characteristics or description

- Postal address or telephone number

- Unique personal identifier, account name, online identifier, Internet Protocol address, or email address

- Education and employment, including employment history

- Social security number, driver’s license number, state identification card number, passport number, or other similar identifiers

- Medical information or health insurance information

- Bank account number, credit/debit card number, payment details, or any other financial information

Additionally, tokenization is no longer exclusively used for protection of data in storage. It can also be used to immediately tokenize data upon entry into a web form or Ecommerce page.

What types of tokenization are available?

There are two main tokenization “systems”: vaulted and vaultless.

Vaulted tokenization

Vaulted tokenization typically involves creating a token mapping table, where sensitive data is centralized on that table. This reduces the number of systems processing and/or storing sensitive data, thereby reducing the risk of the overall environment by centralizing that risk in the systems storing and managing the mapping table. Since the mapping table becomes the most desirable target, sensitive data stored in the token mapping table should be encrypted and the secret key managed securely.
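
The token mapping table described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `TokenVault` class, the `tok_` prefix and the in-memory dictionaries are hypothetical, and the sketch leaves out the encryption of stored values and the key management that a real vault would need.

```python
import secrets


class TokenVault:
    """Toy in-memory token vault (hypothetical, not a production design)."""

    def __init__(self):
        self._token_to_value = {}  # the token mapping table
        self._value_to_token = {}  # reverse index: one token per value

    def tokenize(self, value: str) -> str:
        # Reuse the existing token if this value was tokenized before.
        if value in self._value_to_token:
            return self._value_to_token[value]
        # The token is random, so it is not derived from the value.
        token = "tok_" + secrets.token_hex(16)
        while token in self._token_to_value:  # guard against a collision
            token = "tok_" + secrets.token_hex(16)
        # A real vault would encrypt the value before storing it.
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token: str) -> str:
        # Only the system holding the mapping table can reverse a token.
        return self._token_to_value[token]


vault = TokenVault()
pan = "4111111111111111"  # commonly used test card number, not real data
token = vault.tokenize(pan)
print(token)
print(vault.detokenize(token) == pan)
```

Downstream systems would store and pass around only `token`; the sensitive value lives solely in the vault, which is why the vault becomes the system to protect most heavily.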

Vaultless tokenization

Vaultless tokenization refers to tokenization which does not require a token mapping table and relies on an algorithm to transform data into tokens. Implementations deemed secure using this method rely on industry approved algorithms and modes of operation and, when outsourced, can be implemented such that compromise of the tokenized (encrypted) data renders zero value to the malicious actor. As with standard encryption, a malicious actor in possession of tokenized data without a secret key has no means to recover the clear-text data.

Vaultless tokenization provides the easiest way to secure an organization’s data: the general population of the organization’s systems never sees the original data strings, and only a small number of heavily controlled systems are allowed to transform tokens back to sensitive data.
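
To make the idea of an algorithm plus a secret key concrete, here is a toy sketch of a keyed, reversible transformation over digit strings. It is an assumption-laden illustration, not one of the industry-approved algorithms mentioned above: it uses a simple Feistel construction with HMAC-SHA256 as the round function, the names `tokenize`, `detokenize` and `ROUNDS` are hypothetical, and it should not be used to protect real data.

```python
import hashlib
import hmac

ROUNDS = 10  # even number of rounds, chosen for illustration only


def _round_value(key: bytes, round_no: int, half: str) -> int:
    # Keyed pseudorandom function over the round number and one half.
    msg = bytes([round_no]) + half.encode()
    return int.from_bytes(hmac.new(key, msg, hashlib.sha256).digest(), "big")


def _check(digits: str) -> None:
    if not (digits.isascii() and digits.isdigit() and len(digits) >= 2):
        raise ValueError("expected a string of at least two ASCII digits")


def tokenize(key: bytes, digits: str) -> str:
    _check(digits)
    u = len(digits) // 2
    v = len(digits) - u
    a, b = digits[:u], digits[u:]
    for i in range(ROUNDS):
        m = u if i % 2 == 0 else v  # width of the half being replaced
        c = (int(a) + _round_value(key, i, b)) % 10**m
        a, b = b, str(c).zfill(m)
    return a + b


def detokenize(key: bytes, token: str) -> str:
    _check(token)
    u = len(token) // 2
    v = len(token) - u
    a, b = token[:u], token[u:]
    for i in reversed(range(ROUNDS)):  # undo the rounds in reverse order
        m = u if i % 2 == 0 else v
        c = (int(b) - _round_value(key, i, a)) % 10**m
        a, b = str(c).zfill(m), a
    return a + b


key = b"demo-key-not-for-production"  # hypothetical key for the demo
pan = "4111111111111111"  # commonly used test card number, not real data
token = tokenize(key, pan)
print(token)
print(detokenize(key, token) == pan)
```

No mapping table is stored: any system holding the key can recompute the original value, and a system without the key sees only a digit string of the same length.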

How does tokenization look?

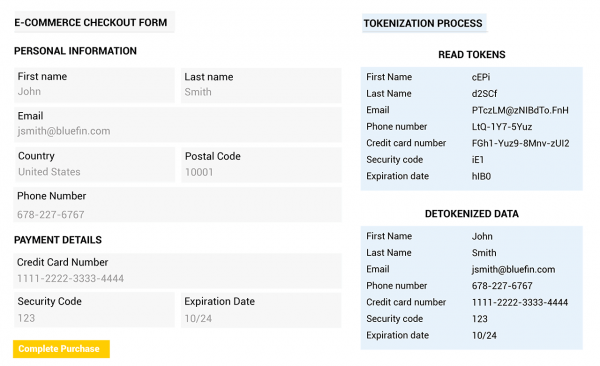

It can be hard to envision what a token is or how this process looks. However, here is an example of an Ecommerce page where format-preserving tokenization (FPT) has been applied with Bluefin’s ShieldConex® data security platform. In format-preserving tokenization, the randomly generated symbols use the same alphabet as the original data.
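
As a rough illustration of what "same alphabet" means, the sketch below replaces each digit with a random digit and each letter with a random letter of the same case. The function name and the choice to leave separators such as "-" or "@" untouched are assumptions made for this example; it does not describe how ShieldConex generates tokens. Because the output is random, the original could only be recovered from a stored mapping.

```python
import secrets
import string


def format_preserving_token(value: str) -> str:
    """Return a random token with the same length and character classes."""
    out = []
    for ch in value:
        if ch in string.digits:
            out.append(secrets.choice(string.digits))
        elif ch in string.ascii_lowercase:
            out.append(secrets.choice(string.ascii_lowercase))
        elif ch in string.ascii_uppercase:
            out.append(secrets.choice(string.ascii_uppercase))
        else:
            out.append(ch)  # assumption: keep separators and symbols as-is
    return "".join(out)


print(format_preserving_token("4111-1111-1111-1111"))
print(format_preserving_token("Jane.Doe@example.com"))
```

A token shaped like the original can pass through existing form validation and database column constraints, which is the practical appeal of preserving the format.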

ShieldConex® is a vaultless tokenization platform that secures PII, PHI, cardholder data (CHD) and ACH account data entered online. ShieldConex immediately masks sensitive data upon entry through Bluefin’s iFrame or APIs, ensuring that it never travels through a system or network as clear-text, where it could be accessible in the event of a data breach. Clients can leverage format-preserving encryption (FPE), format-preserving tokenization (FPT) or a combination of both with ShieldConex.

You can learn more about tokenization and how it differs from encryption, or check out our white paper by QSA Foregenix for a deeper dive into tokenization.

Protect Your Data from Security Threats

With the ever-present threat of cyber attacks, get peace of mind with Bluefin’s omnichannel, integrated security solutions.

Bluefin specializes in PCI-validated P2PE and tokenization, which work in tandem to secure sensitive data, including payment information and Personally Identifiable Information (PII). Our solutions provide organizations in retail, healthcare, higher education, government, nonprofit and more with flexible options to devalue all data upon intake, in transit and in storage.

Learn more about our solutions for payment security and data security, or contact us today for a free consultation with our Security Solutions team.